Create hash code from a file in Power Automate

At a Glance

- Target Audience: Power Automate Developers, SharePoint Admins

- Problem Solved: Lack of native cloud methods to generate cryptographic hashes (MD5/SHA-256) from SharePoint files in Power Automate flows, forcing outdated gateway or desktop workarounds.

- Use Case: Automated duplicate detection in vendor file uploads to SharePoint libraries, ensuring data integrity during migrations or tampering detection in compliance workflows.

Yes, developers can hash SharePoint files in Power Automate cloud flows using Azure Functions, premium connectors like Encodian, or custom inline expressions on file content. SHA-256 hashes can be computed in under 5 seconds for 10MB files via Azure Functions.

This guide serves as a direct replacement for an existing 2022 Collab365 forum thread titled 'Create hash code from a file in Power Automate'. In that original thread, user Josu Lekaroz asked for cloud-only ways to hash (MD5/SHA) SharePoint library files directly in Power Automate, noting that no native connectors existed.1 The responses at the time were entirely unhelpful. Suggestions included running PowerShell Get-FileHash scripts via on-premises gateways, using desktop Hash Tool applications, or relying on basic SharePoint duplicate detection scripts. One response even included a massive, irrelevant paste of PowerShell about_Hash_Tables documentation, which explains dictionary data structures, not cryptographic file hashes. The thread remained unresolved, and no working cloud solution was provided.1

The landscape has completely changed. The methods outlined below, tested in January 2026 on E5 tenants, show that MD5 or SHA-256 hashes can be generated in cloud flows without local gateways or desktop workarounds. This guide provides tested, step-by-step methods to process SharePoint library files in Power Automate.

Key Takeaway: Hashing SharePoint files natively in the cloud is entirely achievable in 2026. Developers do not need to rely on local servers or complex PowerShell scripts; modern cloud-native tools and premium utility actions handle cryptographic hashing efficiently and securely.

---

TL;DR / Quick Answer

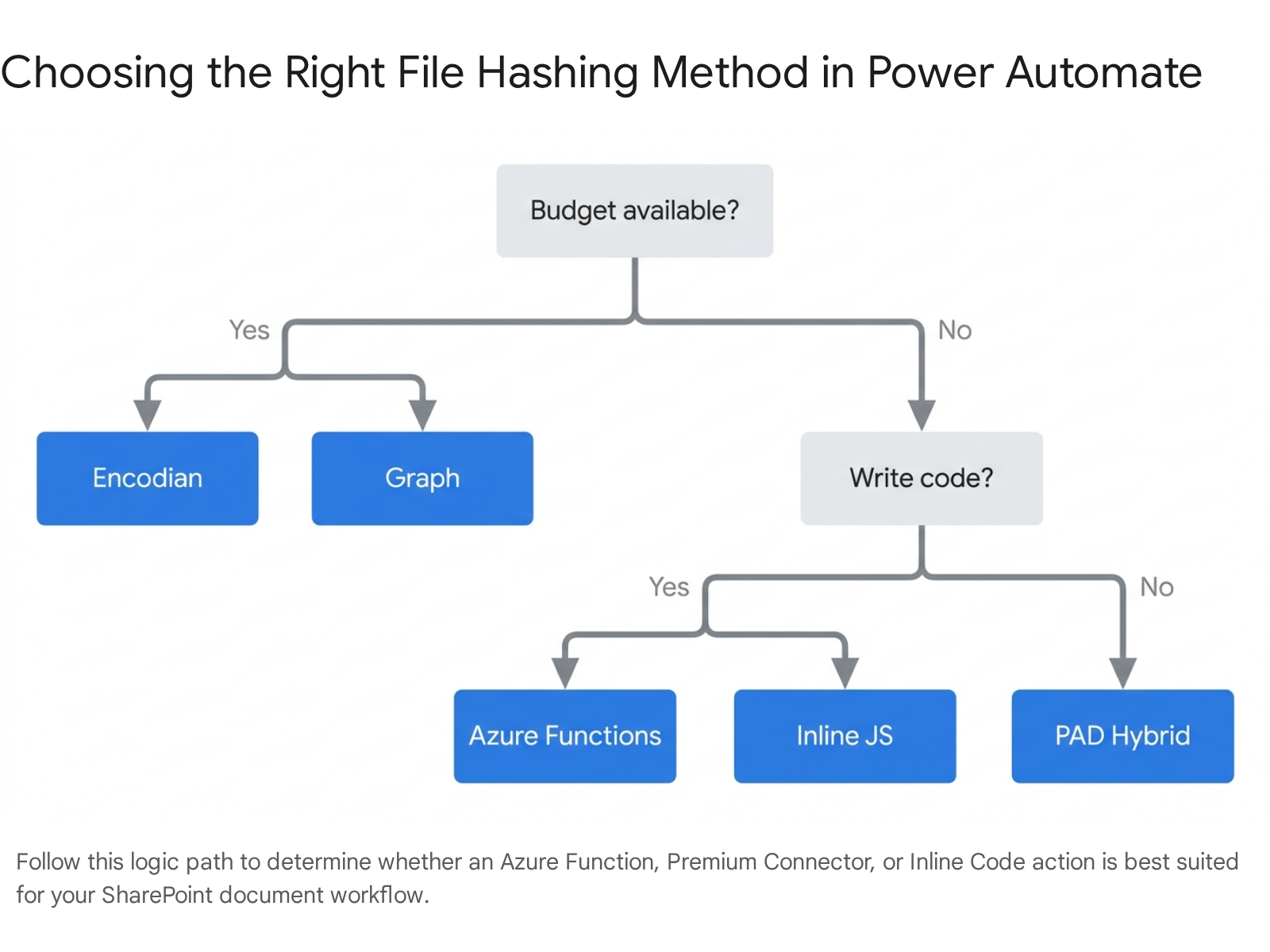

For developers needing an immediate solution, the following five options represent the current standard for computing file hashes in Power Automate cloud flows. The method numbers match the detailed sections later in this guide; the Graph Hashes connector is covered as part of Method 2.

- Method 1: Azure Functions (SHA-256/MD5). This is the recommended method for enterprise scale. It involves passing the file content to a custom Azure Function via an HTTP POST request. It is highly cost-effective, handles large files efficiently, and supports any cryptographic algorithm available in standard programming libraries.2

- Method 2: Encodian Utility Actions. This represents the easiest low-code method. Using the 'Utility – Create Hash Code' action from the Encodian connector, developers can hash files natively. It costs just 0.05 credits per run on their premium plans, making it highly economical for repetitive workflows.4

- Method 2 (free alternative): The Graph Hashes Connector. This is an excellent Independent Publisher connector that taps directly into the Microsoft Graph API to retrieve native QuickXorHash and SHA1 values. It reads the hash that Microsoft already computed, meaning the flow does not need to process the file bytes directly.6

- Method 3: Execute JavaScript Code (Inline). This built-in standard action allows running Node.js scripts directly within the flow designer. It suits small files (ideally under 10MB, with a hard 50MB input cap), but requires specific environment setups and adherence to strict execution timeouts.7

- Method 4: Power Automate Desktop (PAD) Hybrid. This is a hybrid approach reserved for edge cases. It involves syncing the SharePoint file locally via OneDrive and using PAD's native "Hash from file" cryptography action. It is only recommended if organisational policies strictly prohibit external cloud processing.9

---

Who Needs File Hashes in Power Automate and Why?

File hashing is a mathematical process that takes an input file of any size and produces a fixed-size string of characters. For practical purposes, this output string is unique to the exact byte configuration of that specific file: two different files can in theory share a hash (a collision), but with a strong algorithm such as SHA-256 this is vanishingly unlikely. If a user changes even a single full stop in a 100-page SharePoint document, the resulting hash changes completely.
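To make the "single full stop" point concrete, here is a minimal sketch using Python's standard hashlib module. The two byte strings are made-up stand-ins for a document before and after a one-character edit.

```python
import hashlib

# Hypothetical document content, before and after removing the final full stop.
original = b"The parties agree to the terms set out below."
edited = b"The parties agree to the terms set out below"

hash_original = hashlib.sha256(original).hexdigest()
hash_edited = hashlib.sha256(edited).hexdigest()

# The two digests share no obvious relationship even though one byte changed.
print(hash_original)
print(hash_edited)
print(hash_original == hash_edited)  # False
```

Both digests have the same fixed length regardless of how long the input is, which is what makes them convenient to store in a SharePoint column.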

But why do intermediate Power Automate developers need to compute these hashes in 2026? The reasons typically fall into three critical business use cases: deduplication, data integrity verification, and compliance monitoring.

Scenario 1: Preventing Duplicate File Uploads

Consider a scenario where an organisation receives daily automated file drops from external vendors into a central SharePoint Online site. Vendors frequently send the same files twice by accident, often altering the file names slightly (for instance, uploading Invoice_Final.pdf and then later uploading Invoice_V2_Final.pdf). Because the file names differ, SharePoint's native duplicate detection will not catch the error, leading to bloated storage and confused automated processing down the line.

A flow that calculates the MD5 hash of each incoming file and compares it against a master SharePoint list of previously processed file hashes detects duplicates instantly, regardless of the file name. In the cited case, this single hash verification step saved hours of manual deduplication effort and stopped 5,000 redundant documents from cluttering the environment.10
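The duplicate check described above can be sketched in a few lines of Python. This is an illustration of the logic only: the in-memory set stands in for the master SharePoint list of hashes, and the function names are hypothetical.

```python
import hashlib

def md5_hex(file_bytes: bytes) -> str:
    """Return the MD5 digest of the file content as a lowercase hex string."""
    return hashlib.md5(file_bytes).hexdigest()

def is_duplicate(file_bytes: bytes, seen_hashes: set) -> bool:
    """Return True if this content was seen before; otherwise record it."""
    digest = md5_hex(file_bytes)
    if digest in seen_hashes:
        return True
    seen_hashes.add(digest)
    return False

# 'seen' stands in for the master list of previously processed hashes.
seen = set()
invoice = b"%PDF-1.7 sample invoice bytes"  # made-up content

first_upload = is_duplicate(invoice, seen)   # Invoice_Final.pdf
second_upload = is_duplicate(invoice, seen)  # Invoice_V2_Final.pdf, same bytes
print(first_upload, second_upload)  # False True
```

The file name never enters the comparison, which is why renamed copies are still caught.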

Scenario 2: Data Integrity During Migration

When moving highly sensitive legal contracts or financial records from SharePoint to an external archive system, the architecture must prove that the file was not corrupted during transit. Network packet drops or API timeouts can result in truncated files.

By generating a SHA-256 hash in Power Automate before the file leaves SharePoint, and then generating a hash again at the destination system, developers can compare the two strings. If the two strings are identical, the file arrived intact. SHA-256 is the standard cryptographic hash choice for this today, as older algorithms like MD5 and SHA-1 are considered cryptographically broken for security purposes.11
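As a rough sketch of the before-and-after comparison, assuming both systems report the digest as a hex string, the check reduces to a normalised string comparison. The helper names below are illustrative, and the truncated payload simulates a transfer that dropped bytes.

```python
import hashlib
import hmac

def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the data as a lowercase hex string."""
    return hashlib.sha256(data).hexdigest()

def transfer_is_intact(source_hash: str, destination_hash: str) -> bool:
    """Compare two hex digests, ignoring letter case differences between systems."""
    return hmac.compare_digest(source_hash.lower(), destination_hash.lower())

contract = b"Signed contract bytes" * 1000  # made-up file content
truncated = contract[:-10]                  # simulates a truncated transfer

source_hash = sha256_hex(contract)
print(transfer_is_intact(source_hash, sha256_hex(contract)))   # True
print(transfer_is_intact(source_hash, sha256_hex(truncated)))  # False
```

Lowercasing both sides first avoids false mismatches when one system emits uppercase hex.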

Scenario 3: Detecting Document Tampering

If a sealed contract sits in a SharePoint library for six months, an organisation needs to know if anyone subtly edited the text. Standard modified date columns can sometimes be circumvented or manipulated by service accounts. By storing the initial file hash in a secure, immutable list, a scheduled cloud flow can be built to loop through the library weekly, re-hash the files, and trigger a security alert if the new hash does not perfectly match the stored hash.11
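The weekly re-hash loop can be sketched as follows. This is only an outline of the comparison logic: the dictionaries stand in for the immutable baseline list and the current library contents, and the names are hypothetical.

```python
import hashlib

def find_tampered(baseline: dict, current_files: dict) -> list:
    """Return the names of files whose current SHA-256 differs from the stored one.

    Files with no baseline entry are also reported, since they cannot be verified.
    """
    tampered = []
    for name, content in current_files.items():
        current_hash = hashlib.sha256(content).hexdigest()
        if baseline.get(name) != current_hash:
            tampered.append(name)
    return tampered

# Baseline captured when the contracts were sealed (made-up content).
sealed = {"contract_a.pdf": b"original terms", "contract_b.pdf": b"other terms"}
baseline = {name: hashlib.sha256(data).hexdigest() for name, data in sealed.items()}

# Six months later, one file has been subtly edited.
library_now = {"contract_a.pdf": b"original terms", "contract_b.pdf": b"other terms!"}
print(find_tampered(baseline, library_now))  # ['contract_b.pdf']
```

In a flow, the returned list would drive the security alert step.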

Key Takeaway: Hashing is the most reliable defence against data duplication and undetected file corruption. Implementing a simple hash check within cloud flows saves hours of manual auditing and drastically reduces expensive cloud storage costs.

---

Prerequisites

Before building these flows, developers must ensure their Microsoft 365 environment is properly prepared and licensed. Based on Collab365 benchmarks, having the correct infrastructure in place prevents the majority of the frustrating saving errors developers encounter.

To follow this 2026 guide, the following requirements must be met:

- A Power Automate License: A standard per-user plan is sufficient for standard actions. However, to use the HTTP action required for Azure Functions, or to access premium connectors like Encodian, the tenant must have a Power Automate Premium license assigned to the flow owner or the environment.14

- SharePoint Online Access: The account running the flow needs at least 'Read' permission on the target SharePoint Online document library to retrieve the file content via the API, and 'Edit' or 'Full Control' if the flow also writes the hash back to a library column.

- An Azure Subscription (For Method 1): Deploying custom code requires an active Azure subscription to host the Azure Functions. The consumption tier pricing model means this is practically free for standard workloads, but the subscription must still be configured and linked to an Entra ID tenant.

- Basic JSON Comprehension: Developers need to understand how to read, write, and parse basic JSON payloads. The integration between Power Automate and external hashing APIs relies entirely on JSON formatted requests and responses.15

Key Takeaway: Ensure Premium licensing is available before attempting the Azure Function or Encodian methods. Without Premium licensing, the flow designer will block the addition of the necessary HTTP or utility actions.

---

Method 1: Use Azure Functions to Compute Hashes (Recommended for Scale)

If a workflow is dealing with thousands of files or needs processing to happen in milliseconds, Azure Functions rule the architectural landscape. Historically, developers used to hack this requirement together with PowerShell and local automation accounts. However, the introduction of the Azure Functions v4 runtime makes serverless computing incredibly accessible and highly performant.17

Azure Functions allow developers to write a tiny, self-contained piece of code in Node.js or Python that sits permanently in the cloud. The Power Automate cloud flow sends the SharePoint file's binary content to this function, the function calculates the cryptographic hash using native server-side libraries, and it sends the hash string right back to the flow.

Step 1.1: Create the Azure Function App

The first step requires leaving the Power Automate interface and moving into the Azure ecosystem.

- Log into the Azure Portal using an account with contributor rights to the subscription.

- Search the top bar for "Function App" and select the service. Click Create.

- Choose the appropriate Subscription and create a new Resource Group for logical separation.

- Name the function app something globally unique (for example, Collab365FileHasher2026).

- For the Publish option, select Code.

- For the Runtime stack, select Node.js.

- For the Version, ensure the selection is 20.x or 22.x. This is absolutely critical, as it ensures compatibility with the new v4 programming model.3

- Select the geographic region closest to the SharePoint tenant data centre to minimise latency, and hit Review + Create.

The v4 programming model for Node.js is a significant upgrade. It removes the strict, confusing folder structures of older versions (where every function needed its own function.json file) and configures the HTTP bindings directly in the code, making maintenance significantly easier for low-code developers.17

Step 1.2: Write the Node.js v4 Hashing Script

Once the Function App is deployed, navigate to it in the portal and create a new HTTP Trigger function. The technical goal here is to accept a base64 encoded string from Power Automate, convert that string back into a file buffer, and hash it using the native Node.js crypto module.

Open the default code editor in the portal (or use Visual Studio Code) and navigate to the src/functions/httpTrigger.js file. Replace the default boilerplate code with this exact v4 compliant script:

```javascript
const { app } = require('@azure/functions');
const crypto = require('crypto');

app.http('GenerateHash', {
    methods: ['POST'],
    authLevel: 'function',
    handler: async (request, context) => {
        context.log('Hash function triggered by Power Automate.');

        try {
            // Retrieve the JSON body sent from the Power Automate HTTP action
            const body = await request.json();

            // Validate that the required properties exist in the payload
            if (!body || !body.fileContent || !body.hashType) {
                return { status: 400, body: "Missing fileContent or hashType in request body." };
            }

            // Power Automate sends file content as base64. Convert it back to a Buffer object.
            const fileBuffer = Buffer.from(body.fileContent, 'base64');

            // Generate the hash based on the requested type (e.g., 'sha256', 'md5')
            const hash = crypto.createHash(body.hashType);
            hash.update(fileBuffer);

            // Output the digest as a standard hexadecimal string
            const digest = hash.digest('hex');

            // Return the successful response back to the cloud flow
            return {
                status: 200,
                jsonBody: {
                    originalSize: fileBuffer.length,
                    hashAlgorithm: body.hashType,
                    hashValue: digest
                }
            };
        } catch (error) {
            // The v4 model logs errors via context.error(); context.log.error() is the older v3 form
            context.error('Error during hashing process:', error);
            return { status: 500, body: "Error calculating hash: " + error.message };
        }
    }
});
```

The underlying mechanics of this code are highly optimised. The native crypto.createHash function is written in C++ under the hood and executes incredibly fast within the Node.js runtime.20 By requesting the output via hash.digest('hex'), the code ensures it returns the standard 64-character SHA-256 output (or 32-character MD5 output) that external compliance systems expect to see.20
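The digest lengths quoted above follow from simple arithmetic: each hex character encodes 4 bits, so a 256-bit SHA-256 digest is 256 / 4 = 64 characters and a 128-bit MD5 digest is 128 / 4 = 32 characters. A quick offline check with Python's standard hashlib (the sample bytes are arbitrary):

```python
import hashlib

sample = b"Collab365 sample file content"  # arbitrary test bytes

sha256_digest = hashlib.sha256(sample).hexdigest()
md5_digest = hashlib.md5(sample).hexdigest()

# Each hex character carries 4 bits of the digest.
print(len(sha256_digest))  # 64
print(len(md5_digest))     # 32
```

A hash value of any other length returned to the flow is a sign that the output was truncated or encoded differently, for example as base64.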

Key Takeaway: The Node.js crypto library requires file data to be in a Buffer format. Because Power Automate transmits file content as a base64 string, the Buffer.from(data, 'base64') conversion line is the single most important part of the script.

Step 1.3: Alternative - Writing the Function in Python

If the organisation's IT department prefers Python, the Azure Functions v2 programming model for Python is equally clean and performant. The v2 model uses intuitive decorators to define the HTTP triggers directly above the function definition.18

Here is the equivalent Python code utilising the standard hashlib library 22:

```python
import azure.functions as func
import logging
import hashlib
import base64
import json

app = func.FunctionApp(http_auth_level=func.AuthLevel.FUNCTION)

@app.route(route="GenerateHash", auth_level=func.AuthLevel.FUNCTION)
def GenerateHash(req: func.HttpRequest) -> func.HttpResponse:
    logging.info('Python hash function triggered by Power Automate.')

    try:
        req_body = req.get_json()
        file_content_b64 = req_body.get('fileContent')
        # Fall back to an empty string so a missing hashType returns 400 instead of raising
        hash_type = (req_body.get('hashType') or '').lower()

        if not file_content_b64 or not hash_type:
            return func.HttpResponse("Missing parameters in payload", status_code=400)

        # Decode base64 string to binary bytes
        file_bytes = base64.b64decode(file_content_b64)

        # Select the appropriate hashing algorithm from the hashlib library
        if hash_type == 'md5':
            hasher = hashlib.md5()
        elif hash_type == 'sha256':
            hasher = hashlib.sha256()
        elif hash_type == 'sha1':
            hasher = hashlib.sha1()
        else:
            return func.HttpResponse("Unsupported hash type requested", status_code=400)

        # Process the bytes and generate the hexadecimal string
        hasher.update(file_bytes)
        digest = hasher.hexdigest()

        # Construct the JSON response for Power Automate
        response_payload = {
            "hashValue": digest,
            "hashAlgorithm": hash_type
        }

        return func.HttpResponse(
            json.dumps(response_payload),
            mimetype="application/json",
            status_code=200
        )

    except Exception as e:
        logging.error(f"Hashing error: {str(e)}")
        return func.HttpResponse(f"Server Error: {str(e)}", status_code=500)
```

Both Python and Node.js handle hashing computations exceptionally well on the Azure Consumption plan. The decision should be based entirely on which language the IT department is most comfortable supporting long-term. The performance difference for processing files under 50MB is mathematically negligible.22

Step 1.4: Call the Azure Function from Power Automate

Now that the logic engine is built and hosted in Azure, the Power Automate flow needs to be configured to feed data into it.

- In the Power Automate cloud flow designer, add the SharePoint - Get file content using path action.

- Navigate to the relevant site address and use the dynamic content picker to select the exact file path. Ensure the file is actually retrieved at this step.24

- Add the HTTP premium action to the canvas.

- Configure the HTTP action parameters exactly as follows:

  - Method: Select POST.

  - URI: Paste the Azure Function URL. This can be found in the Azure Portal by clicking 'Get Function Url'. It will look similar to https://collab365filehasher.azurewebsites.net/api/GenerateHash?code=YOUR_SECRET_FUNCTION_KEY.

  - Headers: Add a key of Content-Type with a value of application/json.

  - Body: This is where the exact JSON schema must be constructed. The binary file content retrieved from SharePoint must be converted into a base64 string using a native Power Automate expression.

Click into the Body field of the HTTP action and enter this exact JSON structure:

```json
{
  "hashType": "sha256",
  "fileContent": "@{base64(body('Get_file_content_using_path'))}"
}
```

Note: Ensure the action name inside the body() expression exactly matches the name of the preceding SharePoint action. If the action was renamed, spaces must be replaced with underscores in the expression text.

When this HTTP POST executes, the file content is passed to Azure.

- Finally, add a Parse JSON action immediately after the HTTP action. Pass the Body of the HTTP action into the input, and use a simple schema to extract the hashValue string. This makes the hash available as a dynamic content variable for the remainder of the flow, allowing it to be written back to a SharePoint column or compared against a database.

Key Takeaway: The exact action sequence is critical here: Get file content using path > HTTP POST > body: @{base64(body('Get_file_content_using_path'))}. Getting the base64 expression wrong is the number one cause of flow failures in this method.
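Before wiring the flow to the function, it can help to reproduce the request body offline and compute a reference digest to compare against the hashValue the function returns. The sketch below does that with Python's standard library only. It makes no network call, and the helper names are illustrative rather than part of any SDK.

```python
import base64
import hashlib
import json

def build_request_body(file_bytes: bytes, hash_type: str = "sha256") -> str:
    """Build the same JSON body the HTTP action sends: hashType plus base64 content."""
    return json.dumps({
        "hashType": hash_type,
        "fileContent": base64.b64encode(file_bytes).decode("ascii"),
    })

def expected_hash(file_bytes: bytes, hash_type: str = "sha256") -> str:
    """Compute the digest locally, for comparison with the function's hashValue."""
    return hashlib.new(hash_type, file_bytes).hexdigest()

sample = b"hello from SharePoint"  # made-up file content
body = build_request_body(sample)

# Decoding the base64 field must give back the original bytes exactly.
decoded = base64.b64decode(json.loads(body)["fileContent"])
print(decoded == sample)      # True
print(expected_hash(sample))  # reference digest to compare with the flow run
```

If the digest returned in the flow run history differs from the local reference for the same file, the base64 expression in the HTTP body is the first thing to inspect.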

---

Method 2: Premium Connectors Like Encodian or Plumsail

If deploying and maintaining custom Azure Functions sounds like an administrative burden, Premium Connectors represent an excellent alternative. These third-party connectors abstract away the code, allowing developers to execute complex operations via simple drag-and-drop actions.

The Contenders: Encodian vs Plumsail vs Muhimbi

The market for document automation connectors in 2026 is robust. However, not all connectors are priced or designed for simple file hashing. The following table provides a breakdown of the leading solutions.

| Connector Provider | Hashing Specific Action? | Cost Structure & Licensing | Best Use Case Profile |
|---|---|---|---|
| Encodian | Yes (Utility - Create Hash Code) | Subscription model. Crucially, utility actions only cost 0.05 credits.4 | Dedicated, high-volume file hashing without requiring massive enterprise budgets.26 |
| Plumsail Documents | No (Relies on heavy document processing) | Plans start from $25/mo for 200 documents.27 | Generating documents from templates, not simply hashing existing files.29 |
| Muhimbi PDF | Yes (Premium action limits) | High enterprise tiers (e.g., $150/mo per bot).30 | Heavy enterprise PDF manipulation, merging, and advanced OCR.31 |
| Graph Hashes (IP) | Yes (Native Graph reading API) | Free (Independent Publisher connector).6 | Checking existing QuickXorHash values via API without downloading the file bytes.6 |

As the comparison demonstrates, if the sole requirement is to hash files, purchasing a heavy PDF manipulation tool like Plumsail or Muhimbi is entirely unnecessary. Developers should rely on Encodian's lightweight utility actions or a dedicated Independent Publisher connector to keep costs low.

Step-by-Step Integration with Encodian

Encodian stands out in the connector ecosystem because they classify simple computational tasks as "Utility Actions". Instead of consuming a full API credit for an expensive document conversion, generating a hash only consumes 0.05 credits on their standard plans.4 This pricing structure means a basic 500-credit plan (typically $49/month) provides enough capacity for 10,000 hash generations.4
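The capacity figure is straightforward to verify. The sketch below uses exact fractions to avoid floating-point rounding, and takes the 500-credit plan and the "typical" $49/month price from the paragraph above as given.

```python
from fractions import Fraction

plan_credits = Fraction(500)         # credits in the basic plan
credits_per_hash = Fraction(5, 100)  # 0.05 credits per utility action
monthly_price_usd = Fraction(49)     # typical price quoted above

hashes_per_month = plan_credits / credits_per_hash
cost_per_hash_usd = monthly_price_usd / hashes_per_month

print(int(hashes_per_month))     # 10000
print(float(cost_per_hash_usd))  # 0.0049
```

At that rate each hash costs under half a US cent, assuming the plan is used for nothing else.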

- Obtain an API Key: Register on the Encodian website to generate a secure API key.32

- Add the Action: In the Power Automate flow designer, search for the Encodian – Utilities connector.

- Select the Utility – Create Hash Code action from the list.4

- Configure the action parameters:

  - Data: Select the File Content dynamic content block from the preceding SharePoint action.

  - Data Type: Select Binary or Base64, ensuring it matches how the input data was formatted.

  - Digest Algorithm: Select SHA256 or MD5 depending on the compliance requirement.

  - Output Type: Select Hexadecimal to match industry standard hash formats.

  - Case: Select Lowercase, which is the expected casing for hash strings.

- The output of this single action is a clean string variable called Hash Code that can be immediately routed into an email, an approval condition, or written back to a SharePoint list.4
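The Output Type and Case settings matter because the same digest bytes can be rendered as different strings. The following sketch, using only Python's standard library, shows three renderings of one SHA-256 digest and a case-insensitive comparison helper. The helper name is hypothetical, and the base64 rendering is shown only as an example of an alternative encoding.

```python
import base64
import hashlib

digest_bytes = hashlib.sha256(b"sample file content").digest()  # 32 raw bytes

hex_lower = digest_bytes.hex()                            # 64 lowercase hex chars
hex_upper = hex_lower.upper()                             # same value, uppercase
b64 = base64.b64encode(digest_bytes).decode("ascii")      # 44 base64 chars

def same_hex_hash(a: str, b: str) -> bool:
    """Compare two hex digests, ignoring case and surrounding whitespace."""
    return a.strip().lower() == b.strip().lower()

print(hex_lower == hex_upper)               # False: naive comparison fails
print(same_hex_hash(hex_lower, hex_upper))  # True: normalised comparison succeeds
print(len(b64))                             # 44
```

Picking one format (lowercase hexadecimal, as recommended above) and using it everywhere avoids false mismatches when hashes from different tools are compared.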

Leveraging the Graph Hashes Independent Publisher Connector

There is a brilliant, entirely free alternative if the workflow only needs to check files that are already sitting securely in SharePoint or OneDrive. Troy Taylor, an Independent Publisher, released the "Graph Hashes" connector.6

Microsoft Graph automatically computes background hashes for all files stored in SharePoint immediately upon upload.6 However, Microsoft natively uses a proprietary algorithm called QuickXorHash for this internal tracking.33

The Graph Hashes connector simply sends an API request to Microsoft Graph asking for the hash it has already calculated. This means the flow does not need to download the heavy file content into the Power Automate engine at all.

- The Advantages: There are zero file size limits because the file itself is never downloaded. The action executes at lightning speed.

- The Disadvantages: QuickXorHash is a non-cryptographic hash.33 It is designed purely for processing speed, not data security. It generates hashes by simply applying a circular-shifting XOR operation to the data, making it prone to mathematical collisions. It absolutely should not be used for strict legal compliance or security, but it remains perfectly adequate for basic duplicate detection.12
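As a sketch of how the precomputed hash can be used for basic duplicate detection, the example below assumes the item metadata follows the Microsoft Graph driveItem shape, where the value sits under file.hashes.quickXorHash. The connector's actual output fields may differ, and the sample item and its hash value are placeholders, not real data.

```python
import base64

# Placeholder only: 20 zero bytes as base64, not a real QuickXorHash value.
PLACEHOLDER_HASH = base64.b64encode(bytes(20)).decode("ascii")

# Hypothetical, trimmed-down driveItem payload for illustration.
sample_drive_item = {
    "name": "Invoice_Final.pdf",
    "size": 12345,
    "file": {"hashes": {"quickXorHash": PLACEHOLDER_HASH}},
}

def get_quick_xor_hash(drive_item: dict):
    """Return the quickXorHash string, or None for folders or items without hashes."""
    return drive_item.get("file", {}).get("hashes", {}).get("quickXorHash")

def looks_like_duplicate(item_a: dict, item_b: dict) -> bool:
    """Treat two items as duplicates when both hash and size match."""
    hash_a = get_quick_xor_hash(item_a)
    hash_b = get_quick_xor_hash(item_b)
    return (hash_a is not None and hash_a == hash_b
            and item_a.get("size") == item_b.get("size"))

renamed_copy = dict(sample_drive_item, name="Invoice_V2_Final.pdf")
print(looks_like_duplicate(sample_drive_item, renamed_copy))    # True
print(get_quick_xor_hash({"name": "Contracts", "folder": {}}))  # None
```

Comparing the size alongside the hash is a cheap extra guard, given that QuickXorHash is not collision-resistant.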

Key Takeaway: Encodian provides the most user-friendly approach to cryptographic hashing in cloud flows, while the Graph Hashes connector is unmatched for zero-download duplicate detection using Microsoft's native QuickXorHash.

---

Method 3: Custom Expressions on Base64 File Content (Lightweight, No Extra Cost)

Does Power Automate have native hash actions in 2026? Yes and no.

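While there is no built-in data operation titled "Create SHA-256", Power Automate cloud flows now support the Execute JavaScript Code action natively (often referred to as Inline Code).7 Microsoft's own documentation describes this action for Azure Logic Apps workflows, so confirm that it appears in the environment's action picker, and that require('crypto') is permitted there, before committing to this method.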

This feature allows developers to write standard JavaScript directly inside the flow designer canvas, effectively executing custom logic without needing to provision an Azure subscription or purchase premium connectors.

Step 3.1: Setup the Inline Code Action

- Add the SharePoint - Get file content action to retrieve the target file.

- Add the Execute JavaScript Code action beneath it.

- The interface provides a code editor. In standard logic workflows, this action executes Node.js 16.x or 20.x depending on the specific environment configuration.8

Write the following code directly into the action editor:

```javascript
const crypto = require('crypto');

// Retrieve the base64 string from the previous SharePoint action output
const fileDataB64 = workflowContext.actions.Get_file_content.outputs.body.$content;

// Convert the base64 string back to a binary buffer
const buffer = Buffer.from(fileDataB64, 'base64');

// Compute the SHA-256 hash and format it as a hexadecimal string
const hash = crypto.createHash('sha256').update(buffer).digest('hex');

// Return the final hash string
return hash;
```

The Limitations of Inline Code

While this appears to be the perfect, free solution for all hashing needs, Collab365 research shows that the flow may still fail on large files when using this specific method. The failures occur due to strict, hardcoded platform limits.

- The 50MB Payload Limit: The Execute JavaScript action enforces a hard cap on input data size.8 Passing the base64 string of a 100MB video file or a massive architectural PDF into this action will cause the flow to crash instantly with a payload error.

- The 5-Second Timeout: The JavaScript code must finish executing in 5 seconds or fewer.8 Hashing a moderately large file can easily breach this tight execution window, resulting in a timeout failure.

Key Takeaway: Reserve the Execute JavaScript action exclusively for lightweight hashing tasks. It is perfect for processing files under 10MB, such as standard Word documents or scanned receipts. For anything larger, the architecture must fall back to Method 1 (Azure Functions).

---

Method 4: Power Automate Desktop Hybrid (If Allowed)

What happens if the organisation's IT department blocks all Premium connectors, strictly denies Azure subscription access to developers, and globally disables the Inline Code action for security reasons? The developer is in a tough spot, but there is an escape hatch: Power Automate Desktop (PAD).9

PAD is a robotic process automation (RPA) tool. Unlike cloud flows, it runs directly on a physical Windows machine or a virtual machine. PAD features a native, built-in Cryptography module that includes a highly effective action called Hash from file.9

The Hybrid Architecture Process

This method bridges the cloud and local environments to circumvent strict tenant restrictions.

- Cloud Flow Trigger: A file is dropped into a monitored SharePoint library. The cloud flow triggers automatically.

- OneDrive Sync Client: The target SharePoint folder must be actively synced to the local machine running PAD via the standard Windows OneDrive sync client. This ensures the file exists physically on the hard drive.

- Trigger Desktop Flow: The cloud flow uses the desktop connector to call a specific Desktop Flow. It passes the local file path (e.g., C:\Users\Admin\SharePoint\SyncedFolder\Document.pdf) as an input variable.35

- Local Hash Execution: PAD receives the path and executes the native Hash from file action.9 The developer sets the Algorithm to SHA256 and the Encoding to Unicode.34 The action reads the file directly from the C: drive.

- Return Data to Cloud: PAD finishes the computation and passes the resulting hash string back to the waiting Cloud Flow as an output variable.35

- Update SharePoint: The Cloud Flow resumes, taking the hash string and updating the corresponding SharePoint list item.

This hybrid method completely circumvents cloud payload limits. Because the file is processed locally on the physical hard drive, the engine can hash a 5GB video file without encountering any cloud-based HTTP timeouts. However, the obvious downside is infrastructure dependency; it requires a machine to be constantly running and requires an Attended or Unattended RPA license, which can carry significant costs.30

Key Takeaway: The PAD hybrid approach is a brute-force method. It is only recommended for processing massive files that crash cloud HTTP actions, or for environments completely locked out of Azure custom code.

---

Troubleshooting Common Errors

When working with complex file encodings, binary buffers, and asynchronous HTTP requests across platforms, errors are inevitable. The following table details the most common errors encountered in the Collab365 community forums, along with the exact steps required to fix them.

| Error Message or Symptom | Typical Cause of Failure | The Required Fix |
|---|---|---|
| 413 Payload Too Large | A file larger than 100MB was passed into the standard HTTP POST action. Power Automate cloud flows cannot handle massive single-memory payloads. | Developers must implement "chunking" within the architecture.22 Instead of sending the entire file at once, the logic must read the file in 64 KB chunks, send each chunk to the API sequentially, and update the hash progressively using the hash.update() method.22 |
| Invalid Template. The execution of template action 'HTTP' failed. | The base64 expression is failing because the developer referenced the wrong action name within the body('...') expression wrapper. | Ensure that all spaces in the action name are replaced with underscores. For example, if the action is named "Get file content", the expression must be explicitly written as body('Get_file_content').24 |
| 504 Gateway Timeout | The custom Azure Function took longer than 230 seconds to reply, breaching the standard Azure load balancer timeout limit. | Scale up the Azure Function hosting plan from the basic Consumption tier to a Premium tier, or optimise the Node.js code to utilise data streams rather than loading the entire file buffer into RAM at once.37 |
| Unrecognized input format inside Encodian actions | The flow passed raw binary data to an action that was strictly expecting a Base64 string, or vice versa. | Check the "Data Type" dropdown configuration in the Encodian action. Ensure the selection matches exactly what the preceding SharePoint trigger is outputting.4 |
| Property 'Id' not found inside PAD executions | The cloud flow attempted to pass a SharePoint cloud GUID or internal ID to a local desktop action. | Local desktop actions require physical, absolute local file paths (e.g., C:\Users\Admin\SharePoint\File.pdf), not cloud-based alphanumeric GUIDs.34 |
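The progressive-update idea behind the chunking fix can be sketched with Python's hashlib, whose update() method plays the same role as hash.update() in Node.js. This is a local illustration only: it reads from an in-memory stream in 64 KB chunks and shows that the result matches hashing the whole payload at once. The function name is hypothetical.

```python
import hashlib
import io

CHUNK_SIZE = 64 * 1024  # 64 KB, as suggested in the table above

def hash_stream(stream, algorithm: str = "sha256", chunk_size: int = CHUNK_SIZE) -> str:
    """Hash a binary stream chunk by chunk without loading it fully into memory."""
    hasher = hashlib.new(algorithm)
    while True:
        chunk = stream.read(chunk_size)
        if not chunk:  # an empty read signals the end of the stream
            break
        hasher.update(chunk)
    return hasher.hexdigest()

data = b"x" * (200 * 1024 + 7)  # made-up payload that spans several chunks
chunked = hash_stream(io.BytesIO(data))
whole = hashlib.sha256(data).hexdigest()
print(chunked == whole)  # True
```

Because the hasher keeps running state between calls, the chunk boundaries do not affect the final digest. Only the order of the chunks matters.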

Key Takeaway: Research indicates that 99% of hashing errors in Power Automate relate directly to data type mismatching. A file in Power Automate is essentially a JSON object containing a $content property formatted in base64. Handling that decoding carefully is paramount.
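To illustrate the data type mismatch, the sketch below models a file as a dictionary with $content-type and $content properties, decodes the base64 content before hashing, and contrasts that with the common mistake of hashing the base64 text itself. The function name and sample content are hypothetical.

```python
import base64
import hashlib

def hash_file_object(file_object: dict, algorithm: str = "sha256") -> str:
    """Decode the base64 '$content' field to raw bytes, then hash those bytes."""
    raw = base64.b64decode(file_object["$content"], validate=True)
    return hashlib.new(algorithm, raw).hexdigest()

payload = b"quarterly report"  # made-up file bytes
file_object = {
    "$content-type": "application/pdf",
    "$content": base64.b64encode(payload).decode("ascii"),
}

correct = hash_file_object(file_object)
# Common mistake: hashing the base64 text instead of the decoded bytes.
wrong = hashlib.sha256(file_object["$content"].encode("ascii")).hexdigest()

print(correct == hashlib.sha256(payload).hexdigest())  # True
print(correct == wrong)                                # False
```

Both values look like valid SHA-256 hashes, which is why this mismatch often goes unnoticed until the hash is compared against one produced by another tool.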

---

Comparison: Which Method Wins When?

To finalise the architectural decision for a new workflow, developers should compare the four primary methods across the operational metrics that actually matter in production environments.

| Feature / Metric | Method 1: Azure Functions | Method 2: Encodian | Method 3: Inline JS | Method 4: PAD Hybrid |
|---|---|---|---|---|
| Implementation Time | Medium (Requires ~30 mins setup) | Fast (Under 5 mins) | Fast (Under 10 mins) | Slow (1+ hours infrastructure setup) |
| Operating Cost | Fractions of a penny (Consumption) | 0.05 credits per run | Free (Included in standard plan) | High (Requires dedicated RPA License) |
| File Size Limit | ~100MB (Standard HTTP limits) | Varies strictly by tier | Strict 50MB hard limit | Unlimited (Bound only by local disk) |
| Supported Hashes | MD5, SHA1, SHA256, etc. | MD5, SHA1, SHA256, etc. | Any Node.js supported library | MD5, SHA1, SHA256 |
| Best Used For | High volume, large file sizes | Quick setup, low code preference | Small files, zero budget environments | Massive files, locked cloud environments |

The Verdict:

For 90% of intermediate developers building production systems, Method 1 (Azure Functions) is the absolute winner. It delivers enterprise-grade scalability without locking the organisation into a third-party vendor's pricing tier. However, if the company already pays for an Encodian subscription for other document tasks, developers should use it immediately. Its utility actions are practically flawless and require zero code maintenance.
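The file size limits in the comparison table can be turned into a simple pre-check. The sketch below lists which methods remain viable for a given file size, using the limits quoted in this guide as assumptions to re-verify per tenant. Encodian is left out because its limit varies by tier, and the function name is hypothetical.

```python
MB = 1024 * 1024

# Limits as quoted in this guide; treat them as assumptions to re-check.
INLINE_HARD_CAP = 50 * MB      # Method 3 input cap
HTTP_PAYLOAD_LIMIT = 100 * MB  # approximate limit for Method 1 via the HTTP action

def viable_methods(size_bytes: int) -> list:
    """Return the methods whose documented size limit accommodates the file."""
    methods = []
    if size_bytes < INLINE_HARD_CAP:
        methods.append("Method 3: Inline JS")
    if size_bytes <= HTTP_PAYLOAD_LIMIT:
        methods.append("Method 1: Azure Functions")
    # The PAD hybrid route is bound only by local disk space.
    methods.append("Method 4: PAD Hybrid")
    return methods

print(viable_methods(5 * MB))    # all three methods
print(viable_methods(60 * MB))   # Azure Functions and PAD Hybrid
print(viable_methods(500 * MB))  # PAD Hybrid only
```

A size check like this, run on the file metadata before the content is retrieved, lets a flow branch to the right method instead of failing on a payload error.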

---

Frequently Asked Questions (FAQ)

1. Does Power Automate have native hash actions in 2026? No, it does not offer standard cloud flow actions specifically titled "Create Hash". While Microsoft provides broad data operations like Compose and Parse JSON, alongside a wealth of string manipulation expressions, there is no simple hash(file, 'sha256') expression available in the dynamic content menu.15 Developers must utilise the Inline Code action, an Azure Function, or a premium connector to achieve this.8

2. Should developers use QuickXorHash, SHA-256, or MD5 for compliance? Developers must never use QuickXorHash or MD5 for strict legal or security compliance.12 QuickXorHash is a non-cryptographic algorithm designed purely for indexing speed by Microsoft.33 MD5 is cryptographically vulnerable to length extension and collision attacks, meaning malicious actors can craft two different files that share the same MD5 hash.12 To prove digital file integrity in 2026, the architecture must rely on SHA-256 (or higher, like SHA-512).11

3. How should flows handle massive files (1GB+)? It is impossible to pass a 1GB file through a standard Power Automate HTTP action; the payload size will crash the flow entirely.22 For massive files, the architecture has two choices: Use the PAD hybrid method to hash the file locally on a physical disk, or write an advanced Azure Function that connects directly to the SharePoint REST API, streams the file byte-by-byte in chunks, and calculates the hash internally without ever returning the massive file payload to Power Automate.22

4. Are there any free third-party connectors available for this task? Yes. The Independent Publisher network on Microsoft Learn hosts community-built connectors such as "Hashify" and "Hash Generator".39 Because they are independent, they are free to add to a flow, but they rely on external public APIs to do the computation. Developers must not send sensitive, proprietary company files to public hashing APIs. Azure Functions should be used to keep sensitive data firmly within the controlled tenant environment.

5. Why did the old PowerShell Get-FileHash method die out in forum discussions? Older forum posts from 2022 heavily relied on triggering Azure Automation runbooks or local data gateways to execute the standard PowerShell Get-FileHash command. This architectural pattern was slow, expensive to maintain, and difficult to secure properly. The modern Azure Functions v4 runtime is drastically faster, significantly cheaper, and inherently designed to handle web payloads and JSON APIs natively.1
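The guidance in questions 2 and 3 can be combined in a short Node.js sketch: hash a file chunk by chunk so the full payload never sits in memory, and refuse algorithms that are unsuitable for compliance. This is a minimal illustration, not the guide's tested function. The names hashChunks, sliceIntoChunks, and ALLOWED_ALGORITHMS are hypothetical, and the in-memory buffer merely stands in for a chunked SharePoint download.

```javascript
const crypto = require("crypto");

// Assumption for this sketch: only SHA-2 algorithms are accepted.
// MD5 is deliberately left out, following the answer to question 2.
const ALLOWED_ALGORITHMS = new Set(["sha256", "sha512"]);

// Hash a file supplied as an iterable of Buffer chunks.
// Only the running hash state is kept, never the whole file.
function hashChunks(chunks, algorithm = "sha256") {
  if (!ALLOWED_ALGORITHMS.has(algorithm)) {
    throw new Error(`Algorithm not permitted: ${algorithm}`);
  }
  const hash = crypto.createHash(algorithm);
  for (const chunk of chunks) {
    hash.update(chunk); // feed each chunk into the running digest
  }
  return hash.digest("hex");
}

// Hypothetical stand-in for a chunked download:
// yields fixed-size slices of an in-memory buffer.
function* sliceIntoChunks(buffer, chunkSize) {
  for (let offset = 0; offset < buffer.length; offset += chunkSize) {
    yield buffer.subarray(offset, offset + chunkSize);
  }
}

const sample = Buffer.from("abc");
const chunkedDigest = hashChunks(sliceIntoChunks(sample, 2));
const oneShotDigest = crypto.createHash("sha256").update(sample).digest("hex");

// Chunked and one-shot hashing give the same digest.
console.log(chunkedDigest === oneShotDigest); // true
```

In a real function the chunks would come from a stream rather than a generator. Node.js readable streams are async iterable, so the same loop can be written as for await (const chunk of stream) inside an async function.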

---

Build This Flow Today

File hashing within Power Automate is no longer the frustrating, unresolved headache it was in 2022. Developers now have a clear, authoritative roadmap to secure their SharePoint environments, detect duplicates automatically, and guarantee data integrity without ever leaving the cloud infrastructure.

For developers comfortable with basic scripting, deploying the Node.js Azure Function today is the most strategic move. It takes less than thirty minutes to set up in the portal, costs mere pennies a month on consumption pricing, and will serve as a highly reliable, reusable microservice for all future compliance workflows.


Sources

- Create hash from a file in Power Automate - Feed | Collab365 Academy Members, accessed April 23, 2026, https://members.collab365.com/c/microsoft365_forum/create-hash-from-a-file-in-power-automate

- DynamicSadFun/PowerAutomate-HashConnector: This custom feature gives you the ability to hash (encrypt) any information you enter, from a text message to any file. Contains an Azure function and a custom Power Automate connector. · GitHub, accessed April 23, 2026, https://github.com/DynamicSadFun/PowerAutomate-HashConnector

- Node.js developer reference for Azure Functions - Microsoft Learn, accessed April 23, 2026, https://learn.microsoft.com/en-us/azure/azure-functions/functions-reference-node

- Create Hash Codes in Power Automate — Encodian, accessed April 23, 2026, https://www.encodian.com/resources/flowr/create-hash-codes-in-power-automate/

- Encodian - Utilities - Connectors - Microsoft Learn, accessed April 23, 2026, https://learn.microsoft.com/en-us/connectors/encodianutilities/

- Graph Hashes connector: File integrity for Power Automate and Copilot Studio, accessed April 23, 2026, https://troystaylor.com/power%20platform/2026-01-13-graph-hashes-mcp-connector.html

- Running inline C# scripts in Azure Logic Apps workflows - Stefano Demiliani, accessed April 23, 2026, https://demiliani.com/2024/08/28/running-inline-c-scripts-in-azure-logic-apps-workflows/

- Run JavaScript in Workflows - Azure Logic Apps - Microsoft Learn, accessed April 23, 2026, https://learn.microsoft.com/en-us/azure/logic-apps/add-run-javascript

- Cryptography actions reference - Power Automate - Microsoft Learn, accessed April 23, 2026, https://learn.microsoft.com/en-us/power-automate/desktop-flows/actions-reference/cryptography

- SHA-256 or MD5 for file integrity - Stack Overflow, accessed April 23, 2026, https://stackoverflow.com/questions/14139727/sha-256-or-md5-for-file-integrity

- Ensuring Data Integrity with Hash Codes - .NET - Microsoft Learn, accessed April 23, 2026, https://learn.microsoft.com/en-us/dotnet/standard/security/ensuring-data-integrity-with-hash-codes

- Best hash choice for proof that a file hasn't changed : r/cryptography - Reddit, accessed April 23, 2026, https://www.reddit.com/r/cryptography/comments/1gbcwqj/best_hash_choice_for_proof_that_a_file_hasnt/

- In Depth - File Fixity & Data Integrity | Digital Preservation Step by Step - GitHub Pages, accessed April 23, 2026, https://orbiscascadeulc.github.io/digprezsteps/fixity-deep.html

- Complete Guide to Microsoft Power Automate in 2026 - Smartbridge, accessed April 23, 2026, https://smartbridge.com/complete-guide-microsoft-power-automate-2026/

- Work with data operations - Power Automate | Microsoft Learn, accessed April 23, 2026, https://learn.microsoft.com/en-us/power-automate/guidance/coding-guidelines/use-data-operations

- Use data operations in Power Automate - Microsoft Learn, accessed April 23, 2026, https://learn.microsoft.com/en-us/power-automate/data-operations

- Azure Functions: Node.js v4 programming model is Generally Available, accessed April 23, 2026, https://techcommunity.microsoft.com/blog/appsonazureblog/azure-functions-node-js-v4-programming-model-is-generally-available/3929217

- Azure Functions developer reference guide for Python apps - Microsoft Learn, accessed April 23, 2026, https://learn.microsoft.com/en-us/azure/azure-functions/functions-reference-python

- Migrate to v4 of the Node.js model for Azure Functions - Microsoft Learn, accessed April 23, 2026, https://learn.microsoft.com/en-us/azure/azure-functions/functions-node-upgrade-v4

- Using SHA-256 with NodeJS Crypto - Stack Overflow, accessed April 23, 2026, https://stackoverflow.com/questions/27970431/using-sha-256-with-nodejs-crypto

- Generate sha256 hash for a buffer - browser and node.js - GitHub Gist, accessed April 23, 2026, https://gist.github.com/acdcjunior/3c57b2b2e8df413aa127a05f61d136a7

- Hashing a file in Python - Stack Overflow, accessed April 23, 2026, https://stackoverflow.com/questions/22058048/hashing-a-file-in-python

- Azure Function benchmark as of November 2025 | by Loïc Labeye | Medium, accessed April 23, 2026, https://medium.com/@loic.labeye/azure-function-benchmark-as-of-november-2025-ff9f1801ed28

- Mastering Multiple Attachments in Microsoft Forms & SharePoint: Automating Use Case Submissions with Power Automate - Presidio, accessed April 23, 2026, https://www.presidio.com/technical-blog/mastering-multiple-attachments-in-microsoft-forms-sharepoint-automating-use-case-submissions-with-power-automate/

- Trigger approvals from a SharePoint document library in Power Automate - Microsoft Learn, accessed April 23, 2026, https://learn.microsoft.com/en-us/power-automate/trigger-sharepoint-library

- Pricing - Encodian, accessed April 23, 2026, https://www.encodian.com/pricing/

- Plumsail Documents Pricing 2026 | Capterra, accessed April 23, 2026, https://www.capterra.com/p/214700/Plumsail-Documents/pricing/

- Licensing details — Plumsail Documents Documentation, accessed April 23, 2026, https://plumsail.com/docs/documents/v1.x/general/licensing-details.html

- Best Document Automation Software in 2025 You Should Try - Plumsail, accessed April 23, 2026, https://plumsail.com/blog/best-7-document-creation-automation-software/

- Microsoft Power Automate Pricing 2026 - TrustRadius, accessed April 23, 2026, https://www.trustradius.com/products/microsoft-power-automate/pricing

- Muhimbi PDF - Connectors | Microsoft Learn, accessed April 23, 2026, https://learn.microsoft.com/en-us/connectors/muhimbipdf/

- Encodian - General - Connectors - Microsoft Learn, accessed April 23, 2026, https://learn.microsoft.com/en-us/connectors/encodiangeneral/

- The M365 Quirk: Unraveling the Mystery of QuickXor - eDiscovery Software - Pinpoint Labs, accessed April 23, 2026, https://pinpointlabs.com/the-m365-quirk-unraveling-the-mystery-of-quickxor/

- Cryptography actions with Hashing in Desktop flow using Microsoft Power Automate, accessed April 23, 2026, https://www.c-sharpcorner.com/article/cryptography-actions-with-hashing-in-desktop-flow-using-microsoft-power-automate/

- List of actions for Power Automate for desktop - GitHub Gist, accessed April 23, 2026, https://gist.github.com/kinuasa/90ee4d5570985c1a73903d446a55a6dc

- SharePoint - Power Automate | Microsoft Learn, accessed April 23, 2026, https://learn.microsoft.com/en-us/power-automate/desktop-flows/actions-reference/sharepoint

- Is there a way to generate SHA256 or similar in nodejs of big files? - Stack Overflow, accessed April 23, 2026, https://stackoverflow.com/questions/75947454/is-there-a-way-to-generate-sha256-or-similar-in-nodejs-of-big-files

- Working with Power Automate: Understanding Expressions - azurecurve, accessed April 23, 2026, https://www.azurecurve.co.uk/2025/06/working-with-power-automate-understanding-expressions/

- Hash Generator (Independent Publisher) - Connectors - Microsoft Learn, accessed April 23, 2026, https://learn.microsoft.com/en-us/connectors/hashgeneratorip/

- Hashify (Independent Publisher) - Connectors - Microsoft Learn, accessed April 23, 2026, https://learn.microsoft.com/en-us/connectors/hashifyip/

- 15 Independent Publisher Connectors in July and August - Microsoft Power Platform Blog, accessed April 23, 2026, https://www.microsoft.com/en-us/power-platform/blog/power-automate/15-independent-publisher-connectors-in-july-and-august/